This paper was written in March 2015 for HF 700, Foundations in Human Factors Engineering, as part of the Master of Science in Human Factors in Information Design program at Bentley University.

“Bottom-up processing” describes the stage at which the human nervous system detects signals and their salience but has not yet begun to extract meaning from the data. After the senses gather and roughly organize the data, the brain examines the information and produces a meaningful interpretation of what is being sensed. This interpretation is aided by prior knowledge and is known as “top-down processing.” The prior knowledge that enables humans to understand a stimulus event is based on past experience stored in long-term memory (Wickens, Lee, Liu, & Becker, 2004, pp. 121, 125). The human brain can perform top-down processing quickly and effectively because it is highly organized, interconnected, and constantly evolving. Humans use conceptual structures to guide cognitive processes. Understanding a person’s mental models enables designers to predict how that person will interact with a product, whether they have used it before or have never seen it. This paper examines the mental model of a person using a first-generation iPod for the first time.

Types of Conceptual Models

Humans want to understand everything, which leads us to look for causes of events and properties of objects so that we can form explanations. When we find a cause-and-effect chain or a list of properties that makes sense, we store it as a conceptual model for understanding future events or objects. These conceptual models are essential to understanding our experiences, predicting the outcomes of our actions, and handling unexpected occurrences (Norman, 2013, p. 57). Semantic knowledge is knowledge of the basic meaning of things, and it is organized into semantic networks in which related pieces of information share related nodes and sections of the network (Wickens et al., 2004, p. 136).

A schema is one type of conceptual structure which makes it possible to identify objects and events (D’Andrade, 1992, p. 28). Simply stated, a schema is “a general knowledge structure used for understanding” (An, 2013). Schemas are stored in long-term memory as organized collections that are quickly accessible and flexible in application (Kleider, Pezdek, Goldinger, & Kirk, 2008). The general cognitive framework of a schema provides structure and meaning to social situations and provides a guide for interpreting information, actions, and expectations (Gioia & Poole, 1984).

Schemas about events are known as scripts (Kleider et al., 2008). Scripts are the most behaviorally oriented schemas and are mental representations of sequences and events (Sims & Lorenzi, 1992, p. 237). Script behaviors and sequences are appropriate for specific situations, ranging from the tasks required to tie one’s shoes to the expected “performances” in social situations, such as going to a restaurant, attending lectures, or visiting doctors (Gioia & Poole, 1984). Scripted behavior can be performed unconsciously, although active cognition is required during script development or when a person encounters unconventional situations (Gioia & Poole, 1984).

Mental models are schemas about equipment or systems (Wickens et al., 2004, p. 137). Mental models describe system features and assist in controlling and understanding a system. Mental models can begin as incomplete, inaccurate, and unstable, but become richer as a user gains experience interacting with a system (Thatcher & Greyling, 1998).

Highly Organized

Semantic networks, schemas, scripts, and mental models are highly organized, and schemas are organized within a hierarchy (D’Andrade, 1992, p. 30). The conceptual systems and mental models in the mind are extensive, distributed throughout the brain, and organized categorically (Barsalou, 2008). Semantic networks have much in common with databases or file cabinets, where items are stored near related information that is then linked to other groups of associated information (Wickens et al., 2004, p. 136). Conceptual systems categorize settings, events, objects, agents, actions, and mental states (Barsalou, 2008). Mental models categorize information to create distinct sets of possibilities based on what a person believes to be true (Johnson-Laird, 2013).

Interconnected

The power of the human mind is not in its capacity but in its flexibility: most new concepts are made by assimilating minor differences into existing knowledge (Ware, 2012, p. 386). The conceptual system includes knowledge about all aspects of experience, including settings, events, objects, agents, actions, affective states, and mental states (Barsalou, 2008). Prior knowledge facilitates the processing of new incoming information because it provides a structure into which the new information can be integrated (Brod, Werkle-Bergner, & Shing, 2013).

The mind groups or “chunks” large numbers of attributes into a single gestalt. For example, the configurational attribute of “dogginess” is a configuration of many individual recoded attributes, such as nose, tail, fur, and bark. A collie is a kind of dog, so a collie inherits all of the attributes included in the chunked quality “dogginess” (D’Andrade, 1993, p. 93). The networked nature of prior knowledge means that once a concept is activated, other related concepts become partially activated, or primed (Ware, 2012, p. 386). The strongly interconnected pattern of elements can be activated with minimal input (D’Andrade, 1992, p. 29), and conceptual systems generate anticipatory inferences (Barsalou, 2008).
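The two ideas in this paragraph, inheritance of chunked attributes and priming of related concepts, can be illustrated with a small sketch. It is only a toy model under stated assumptions: the attributes beyond nose, tail, fur, and bark, the association strengths, and the `decay` and `threshold` parameters are all hypothetical values chosen for illustration, not figures from the cited sources.

```python
# Hypothetical "is-a" links: a collie is a kind of dog, a dog a kind of animal.
IS_A = {"collie": "dog", "dog": "animal"}

# Attributes chunked under each concept. Only the "dog" set comes from the
# text; the "animal" and "collie" entries are illustrative assumptions.
ATTRIBUTES = {
    "animal": {"breathes"},
    "dog": {"nose", "tail", "fur", "bark"},
    "collie": {"long coat"},
}

def inherited_attributes(concept):
    """Collect a concept's own attributes plus those of every ancestor."""
    attrs = set()
    while concept is not None:
        attrs |= ATTRIBUTES.get(concept, set())
        concept = IS_A.get(concept)
    return attrs

# Hypothetical association strengths between related concepts (0 to 1).
ASSOCIATIONS = {
    "dog": {"bark": 0.8, "leash": 0.6, "cat": 0.5},
    "cat": {"purr": 0.7},
}

def prime(start, decay=0.5, threshold=0.1):
    """Spread activation outward from one fully activated concept.

    Each neighbor receives the sender's activation scaled by the link
    strength and by `decay`; activation below `threshold` is dropped.
    """
    activation = {start: 1.0}
    frontier = [start]
    while frontier:
        node = frontier.pop()
        for neighbor, strength in ASSOCIATIONS.get(node, {}).items():
            value = activation[node] * strength * decay
            if value >= threshold and value > activation.get(neighbor, 0.0):
                activation[neighbor] = value
                frontier.append(neighbor)
    return activation
```

Calling `inherited_attributes("collie")` returns the collie's own attribute together with everything chunked under “dog” and “animal.” Calling `prime("dog")` leaves “bark,” “leash,” and “cat” partially activated, while the more distant “purr” falls below the threshold and stays inactive.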

Constantly Evolving

The brain is not like a camera that captures and collects holistic images. Instead, the conceptual system is a collection of category knowledge that contains a powerful attentional system that focuses on individual components of experience and establishes categorical knowledge about them (Barsalou, 2003, 2008). New information is consolidated into long-term memory when the mind actively processes the new information to integrate it with existing knowledge (Craik, 2002). Whenever the mind focuses selective attention consistently on components of experience, the mind develops knowledge of the category (Barsalou, 2003). Knowledge is constantly being accumulated because the mind is constantly detecting regularities in the environment (Brod et al., 2013). For example, when the mind focuses on a blue patch of color, the information is extracted and stored with previous memories of blue, which adds to the categorical knowledge of “blue.” Over time, the mind accumulates a myriad of memories in a similar manner for objects, events, locations, times, roles, and so forth (Barsalou, 2003). The mind develops complex concepts such as relations (e.g., above), physical events (e.g., carry), and social events (e.g., convince) through the same mechanism (Barsalou, 2008).

Categorization

Humans categorize all objects and events we encounter in the world. Categorization is essential for survival: if you mistake a stove for a chair or a tiger for a housecat, the consequences may be disastrous. Because of this survival need, the categorization of objects and events takes place unconsciously (Kövecses & Koller, 2006, p. 17). As people process members of a category, they store a memory of each categorization event. When encountering future objects or events, people retrieve these memories, assess their similarity, and include the entity in the category if there is sufficient similarity (Barsalou, Huttenlocher, & Lamberts, 1998). Categorical knowledge provides rich inferences that enable expertise about the world. Rather than starting from scratch when interacting with something, a person can benefit from knowledge of previous category members (Barsalou, 2003).

Three models for categorizing objects and events are the exemplar, prototype, and classical models. In the exemplar model, people’s representation of a category is a loose collection of exemplar memories, and to categorize an entity, a person attempts to find the exemplar memory that is most similar to the entity. In the prototype model, a person extracts properties that are representative of a category’s exemplars and integrates them into a category prototype. In the classical model, an entity must meet certain rules to qualify for membership (Barsalou, 1992, pp. 26-29).
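The difference between the three models can be made concrete with a small sketch. Everything below is an illustrative assumption rather than a method from the cited text: the three-number feature vectors, the use of Euclidean distance as the similarity measure, the averaging used to build a prototype, and the specific membership rules are hypothetical.

```python
# Hypothetical feature vectors: (has_fur, barks, body_size), each scaled 0 to 1.
EXEMPLARS = {
    "dog": [(1.0, 1.0, 0.6), (1.0, 1.0, 0.3), (1.0, 0.9, 0.8)],
    "cat": [(1.0, 0.0, 0.2), (1.0, 0.0, 0.3)],
}

def distance(a, b):
    """Euclidean distance; a smaller distance means greater similarity."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5

def categorize_by_exemplar(entity):
    """Exemplar model: use the category of the most similar stored memory."""
    candidates = (
        (distance(entity, memory), category)
        for category, memories in EXEMPLARS.items()
        for memory in memories
    )
    return min(candidates)[1]

def prototype(memories):
    """Prototype model: average the exemplars into one representative point."""
    count = len(memories)
    return tuple(sum(dimension) / count for dimension in zip(*memories))

def categorize_by_prototype(entity):
    """Pick the category whose prototype is closest to the entity."""
    return min(
        EXEMPLARS,
        key=lambda category: distance(entity, prototype(EXEMPLARS[category])),
    )

# Classical model: explicit membership rules (hypothetical rule set).
RULES = {
    "dog": lambda e: e[0] >= 0.5 and e[1] >= 0.5,
    "cat": lambda e: e[0] >= 0.5 and e[1] < 0.5,
}

def categorize_by_rules(entity):
    """Return the first category whose rule is satisfied, or None."""
    for category, rule in RULES.items():
        if rule(entity):
            return category
    return None
```

One design point the sketch brings out: the two similarity-based functions always return the nearest category, however poor the match, while the rule-based function can reject an entity outright. A furless, silent entity such as `(0.0, 0.0, 0.1)` gets `None` from `categorize_by_rules` but is still assigned a category by the other two.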

Affordances

People interpret and categorize entities to determine if they can operate on their environment (Ware, 2012, p. 18). People perceive not only features of objects, but also information about how to interact with them (Apel, Cangelosi, Ellis, Goslin, & Fischer, 2012). The term “affordances” describes perceived possibilities for action offered by objects. A cup, for example, can be used for drinking or for catching a spider; a cup affords both of these actions (Humphreys, 2001). Affordance is not a property of an object, but a relationship that depends on the object, the environment, and the agent’s mental models (Norman, 2013, p. 11).

Case Study - First Generation iPod

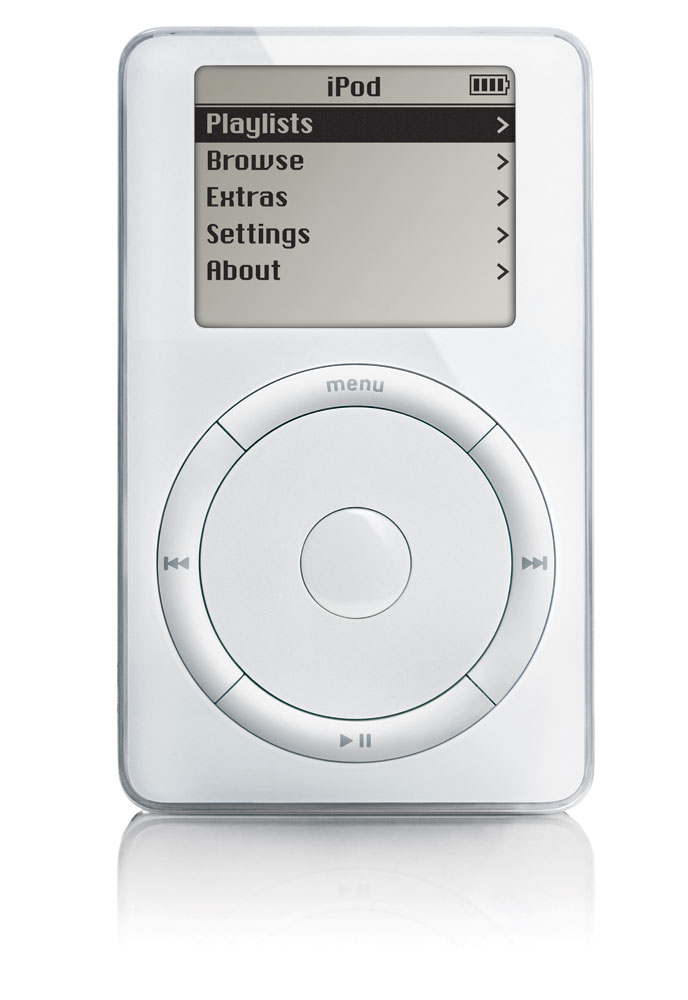

Apple released the iPod on October 23, 2001. Before the iPod, the mass consumer market was familiar with portable music players in the form of cassette players, CD players, and MP3 players (images in Appendix 1). Sony’s Walkman cassette player was released in 1980 and featured buttons for play, stop, fast-forward, rewind, and open, plus a slider for volume. Sony introduced the Discman in 1984, and it featured buttons for play/pause, next song, previous song, stop, play mode, repeat/enter, and open, plus a slider for volume. The first commercially successful MP3 player was the Diamond Rio, introduced in 1998, which featured buttons for play/pause, stop, next track, previous track, volume up, volume down, random, repeat, A-B, and hold.

The iPod has buttons for play/pause, next song, previous song, and menu; a scroll wheel; and an unlabeled button in the center of the wheel that means “select” or “enter.” After clicking “menu,” a user scrolls the wheel clockwise or counterclockwise to navigate down or up the menu, respectively, and clicks the unlabeled center button to choose a line. While a song is playing, the user scrolls the wheel to turn the volume up or down.

Jakob Nielsen’s “Law of Internet User Experience” states that users spend most of their time on websites other than yours. People expect websites to act alike because their mental model is based on what they think they know about a system (Nielsen, 2010). A designer can replace “websites” with “products” and infer that they must determine what a user already knows and then design the product to mirror that knowledge. Doing so keeps the user from “thrashing about” the product and randomly pushing buttons, a pattern that increases anxiety.

First-time iPod users expected to see the buttons that were common across portable music players, such as play, pause/stop, forward, and backward. These frequently used functions had always been buttons on other music players, so keeping them as physical buttons kept the design within a known mental model. Apple mirrored that model by offering physical play/pause, next, and previous buttons. Volume had been a slider, a scroll wheel, or a set of buttons on previous players, so while users expected to be able to change the volume, there was no set model for how to manipulate it. The MP3 player category introduced the ability to store individual songs from many albums or artists, rather than playing a single album on a cassette or CD. A first-time user therefore had no mental model for how to navigate through menu options or change the volume on the iPod.

The menu button, scroll wheel, and select button were new with the iPod, but Apple used signifiers effectively to help first-time users understand the affordances and build a model of how to use the device. Where affordances show what actions are possible, signifiers show where the action should take place. To be effective, affordances and anti-affordances have to be discoverable and perceivable (Norman, 2013, pp. 11, 14), and on the iPod they are. The menu button is prominent at the top of the wheel and is a natural place to begin exploration. The first click of “menu” shows users the options (artists, albums, etc.). When the user is further into the menu or playing a song, clicking “menu” brings up the most recent menu screen, and subsequent clicks of “menu” navigate up the hierarchy until the user reaches the home screen. At the home screen, clicking “menu” has no effect, which is an anti-affordance that informs the user that this is the top-level folder. The five buttons at the top, right, bottom, left, and center provide haptic feedback by clicking in and out when pressed. This haptic feedback signals clearly that the button has been pressed, so users do not have to wonder whether they performed the action correctly.
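The menu button’s behavior described above maps naturally onto a stack of screens. The sketch below is a hypothetical toy model written for this paper, not Apple’s implementation: the class name, method names, and screen labels are assumptions chosen for illustration.

```python
class MenuNavigator:
    """Toy model of moving down and back up a menu hierarchy."""

    def __init__(self):
        # The stack holds the path from the home screen to the current screen.
        self.path = ["Home"]

    @property
    def current(self):
        """The screen the user is looking at right now."""
        return self.path[-1]

    def select(self, item):
        """Center button: descend into the highlighted item."""
        self.path.append(item)
        return self.current

    def menu(self):
        """Menu button: go up one level; at the home screen, do nothing."""
        if len(self.path) > 1:
            self.path.pop()
        return self.current


nav = MenuNavigator()
nav.select("Artists")
nav.select("Some Artist")
nav.menu()  # back to "Artists"
nav.menu()  # back to "Home"
nav.menu()  # still "Home": the press has no effect at the top level
```

Because pressing “menu” at the top level leaves the state unchanged, the user can press it repeatedly without risk, which is part of what makes exploration cheap for a first-time user.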

While the menu button has an obvious purpose, using the scroll wheel to navigate up and down the menu is not obvious, because an unlabeled circle does not signify scrolling in a clockwise or counterclockwise direction. The purpose of the center button is also unclear because it is unlabeled, although it is raised from the scroll wheel, a common design pattern for buttons that helps communicate that it affords pressing. The user must either see someone scroll the wheel, in real life or in advertisements, or stumble across the discovery on their own. Exploration that leads to discovery is likely because, according to the Fogg Behavior Model, motivation and ability trade off, and the motivation to use this device is high enough to overcome the cost of clicking a few buttons to see how the device reacts (Fogg, 2009). Apple’s minimalist design limits the possibilities for action, so the user has very few choices of what to click, and the instant feedback helps first-time users rapidly build mental models of what is possible.

Appendix 1

Sony Walkman cassette player, 1980

Sony Discman CD player, 1984

Diamond Rio MP3 player, 1998

Apple iPod, 2001

Bibliography

An, S. (2013). Schema theory in reading. Theory and Practice in Language Studies, 3(1), 130-134.

Apel, J. K., Cangelosi, A., Ellis, R., Goslin, J., & Fischer, M. H. (2012). Object affordance influences instruction span. Experimental Brain Research, 223(2), 199-206. doi: 10.1007/s00221-012-3251-0

Barsalou, L.W. (1992). Cognitive Psychology: An Overview for Cognitive Scientists. Hillsdale, NJ: Lawrence Erlbaum Associates.

Barsalou, L.W. (2003). Situated simulation in the human conceptual system. Language and Cognitive Processes. 18(5/6), 513-562.

Barsalou, L.W. (2008). Cognitive and neural contributions to understanding the conceptual system. Current Directions in Psychological Science. 17(2), 91-95.

Barsalou, L.W., Huttenlocher, J., & Lamberts, K. (1998). Basing categorization on individuals and events. Cognitive Psychology. 36(3), 203-272. doi: 10.1006/cogp.1998.0687

Brod, G., Werkle-Bergner, M., & Shing, Y.L. (2013). The influence of prior knowledge on memory: A developmental cognitive neuroscience perspective. Frontiers in Behavioral Neuroscience. 7, 139. doi: 10.3389/fnbeh.2013.00139

Craik, F. I. (2002). Levels of processing: Past, present ... and future? Memory, 10(5/6), 305-318. doi: 10.1080/09658210244000135

D'Andrade, R. G. (1992). Schemas and motivation. In R. G. D'Andrade and C. Strauss (Eds.) Human Motives and Cultural Models. Cambridge: Cambridge University Press.

D'Andrade, R. G. (1993). The Development of Cognitive Anthropology. Cambridge: Cambridge University Press.

Fogg, B.J. (2009). A Behavior Model for Persuasive Design. Retrieved from http://bjfogg.com/fbm_files/page4_1.pdf

Gioia, D.A., & Poole, P.P. (1984). Scripts in Organizational Behavior. The Academy of Management Review. 9(3), 449-459.

Humphreys, G. (2001). Objects, affordances...action! Psychologist, 14, 408. Retrieved from http://search.proquest.com.ezproxy.babson.edu/docview/211831140?accountid=36796

Johnson-Laird, P.N. (2013). Mental models and cognitive change. Journal of Cognitive Psychology. 25(2), 131-138. doi: 10.1080/20445911.2012.759935

Kleider, H. M., Pezdek, K., Goldinger, S. D., & Kirk, A. (2008). Schema-driven source misattribution errors: Remembering the expected from a witnessed event. Applied Cognitive Psychology. 22(1), 1-20. doi:10.1002/acp.1361

Nielsen, J. (2010, Oct 18). Mental Models. Retrieved from http://www.nngroup.com/articles/mental-models/

Norman, D. (2013). The Design of Everyday Things: Revised and Expanded Edition. New York: Basic Books.

Sims, H. P., Jr., & Lorenzi, P. (1992). The new leadership paradigm: Social learning and cognition in organizations. Newbury Park, CA: Sage.

Thatcher, A., & Greyling, M. (1998). Mental models of the internet. International Journal of Industrial Ergonomics. 22(4-5), 299-305. doi: 10.1016/S0169-8141(97)00081-4

Kövecses, Z., & Koller, B. (2006). Language, mind, and culture: A practical introduction. New York: Oxford University Press.

Ware, C. (2012). Information Visualization, Third Edition: Perception for Design (Interactive Technologies). Waltham, MA: Morgan Kaufmann.

Wickens, C., Lee, J.D., Liu, Y., & Gordon Becker, S.E. (2004). An introduction to human factors engineering (2nd ed.). Upper Saddle River, NJ: Pearson Prentice Hall.